The Sonicom Ecosystem: A Community Platform for the Future of Sound

Imagine stepping into a virtual concert hall or attending a virtual meeting and hearing it exactly as you would in real life. Sounds arrive from precise directions, bounce off walls, and are subtly shaped by the unique way your head and ears interact with sound. This is the promise of spatial audio: bringing realistic, personalised sound to real‑world and virtual experiences.

For years, researchers around the world have been assembling pieces of the puzzle: carefully measured head‑related transfer functions (HRTFs), spatial room impulse responses, motion‑tracking data, specialised recording setups, and bespoke software tools. These resources are scientifically valuable, but they have typically been scattered across different repositories and websites, making them hard to explore, understand, or reuse, especially for anyone outside a small expert group.

Now, that has changed.

A New Home for Spatial Audio Research

The SONICOM Ecosystem is a dedicated, open, and interactive platform designed specifically for research in spatial hearing and personalised binaural audio. It brings together high‑quality data, software tools, and clear documentation in one community platform, making advanced audio research more accessible, reusable, and transparent than ever before.

The Ecosystem is a major output of the EU‑funded SONICOM project, which investigates how we experience sound socially in augmented and virtual reality, from online meetings to immersive gaming. The development of the Ecosystem was led by Dr Piotr Majdak at the Acoustics Research Institute of the Austrian Academy of Sciences (ÖAW).

“The SONICOM Ecosystem is a data repository for researchers in the field of spatial hearing and binaural audio. With the unique feature of visualising the data on the fly, it clearly stands out from the many general-purpose repositories. It can store tools and databases with a clear structure. That structure helps when browsing through the data, selecting the data needed for one’s own use, and later accessing it automatically via apps. Through its integration with ÖAW Datathek, it provides persistent DOIs, ensuring sustainability and easy access worldwide. The various levels of data visibility, from private to persistently published, facilitate the researchers’ typical workflow when publishing research articles accompanied by data.

The SONICOM Ecosystem provides commenting functionality, facilitating interaction between data users and providers.”

Importantly, the Ecosystem does not just store files: it also hosts tools, software, and models for researchers to use, and shows clearly how these tools connect to the data. This means users don’t just download information and figure it out later; they can explore it, understand how it works, and easily plug it into their own projects or applications.

Data can be kept private while work is ongoing, shared openly with others, or permanently published so it can be cited in scientific papers. Each published dataset receives a DOI (Digital Object Identifier), so it can be reliably found and used years into the future. This makes the SONICOM Ecosystem a trustworthy, long‑term resource for researchers, developers, and anyone working with spatial audio.

Why the SONICOM Ecosystem Matters

Spatial audio is becoming central to many areas of technology and research, including:

- Virtual and augmented reality

- Gaming and immersive media

- Hearing aids and assistive listening devices

- Audio engineering and auditory neuroscience

But progress depends on three things working together:

- High‑quality, standardised data, such as HRTFs, spatial room impulse responses, source and sensor directivities, headphone responses, motion‑tracking data, and annotated binaural recordings

- Robust software tools and models, including measurement toolboxes, simulation frameworks, auditory models, and neural network weights used in spatial‑audio processing

- A shared scientific environment where researchers can collaborate, comment on datasets, and build directly on each other’s work

The SONICOM Ecosystem was designed specifically to bring these components together in one coherent platform.

What the Ecosystem Offers

A Curated Library of Spatial‑Audio Databases

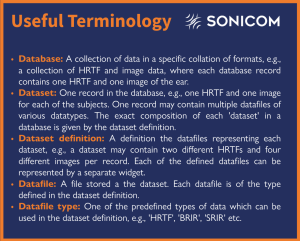

As of today, the Ecosystem hosts 37 databases, each carefully organised so they’re easy to explore and understand. Databases consist of datasets, which in turn contain data files of specific types. All content is clearly described with simple explanations of how the data was recorded, what equipment was used, how it can be shared, and how it connects to other resources on the platform.

The databases cover a wide range of spatial‑audio data, including:

- Head‑related transfer functions (HRTFs)

- Spatial room impulse responses (SRIRs)

- Directivities of loudspeakers, microphones, and musical instruments

- Headphone impulse responses and/or equalisation filters

- Speech and binaural recordings

- Movement and motion‑tracking data

- Supporting materials such as images, 360° images, geometric meshes, text, and tables

This structured approach makes datasets easier to browse, compare, and reuse, even for researchers new to the field.
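The hierarchy described above, in which databases contain datasets and datasets contain data files of specific types, can be pictured as a simple nested data structure. The following Python sketch is purely illustrative: the class names, fields, and type labels (`Database`, `Dataset`, `DataFile`, `"HRTF"`, `"mesh"` and so on) are hypothetical and are not the Ecosystem's actual schema.

```python
from collections import Counter
from dataclasses import dataclass, field


# Hypothetical model of the database -> dataset -> data file hierarchy.
# Names and fields are illustrative only, not the Ecosystem's real schema.
@dataclass
class DataFile:
    name: str
    data_type: str  # hypothetical type label, e.g. "HRTF", "SRIR", "mesh"


@dataclass
class Dataset:
    name: str
    files: list[DataFile] = field(default_factory=list)


@dataclass
class Database:
    title: str
    description: str  # e.g. how the data was recorded, equipment, licence
    datasets: list[Dataset] = field(default_factory=list)

    def count_by_type(self) -> Counter:
        """Count data files per type across all datasets in this database."""
        return Counter(f.data_type for ds in self.datasets for f in ds.files)


# Usage: a toy database with two datasets (all names are made up).
db = Database(
    title="Example HRTF database",
    description="Illustrative description of the measurement setup.",
    datasets=[
        Dataset("subject_01", [DataFile("hrtf_01", "HRTF"), DataFile("photo_01", "image")]),
        Dataset("subject_02", [DataFile("hrtf_02", "HRTF"), DataFile("head_02", "mesh")]),
    ],
)
print(db.count_by_type())
```

Keeping the type on each file, rather than on the dataset, lets a single dataset mix measurements with supporting material such as photos or meshes.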

Explore Data Before You Download It

One of the Ecosystem’s defining features is its dynamic visualisation widgets, which allow users to inspect data directly in the browser before downloading anything. Depending on the data type, users can explore:

- Three‑dimensional HRTF representations

- Spatial room impulse response plots

- Interactive 360° imagery

- Directivity “balloons” for sources and sensors

- Interactive 3D geometric meshes used in simulations or measurements

This immediate visual feedback supports quality control, faster understanding, and more informed reuse of data.

Tools That Connect Data and Practice

Beyond databases, the Ecosystem currently hosts 14 tools, including:

- Software for acoustic measurements and simulations

- Auditory and perceptual models

- Neural networks for spatial‑audio processing

- Scripts for analysis, statistics, and figure generation used in publications

What makes these tools especially useful is that they are directly linked to the relevant data on the platform. Instead of downloading files in isolation, users can see which tools work with which datasets, creating a connected and easy‑to‑navigate environment. The Ecosystem also offers a programmatic interface through which external apps and software can access its data automatically, making it valuable not only for academic research but also for use in real‑world audio products.

As Piotr Majdak explains:

“The programmatic interface provides access to the data from software applications, enabling the usage of the repository data in arbitrary apps provided by the audio industry.”
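One way to picture the links between tools and data described above is to let each tool declare the data types it works with, so that compatible tools can be looked up for any dataset. The sketch below assumes exactly that; the tool names, type labels, and the `compatible_tools` helper are hypothetical illustrations, not the Ecosystem's actual linking mechanism or programmatic interface.

```python
# Hypothetical registry: each tool lists the data types it can process.
# Tool names and type labels are made up for illustration.
TOOLS: dict[str, set[str]] = {
    "hrtf_viewer": {"HRTF"},
    "room_simulator": {"SRIR", "mesh"},
    "localisation_model": {"HRTF", "binaural_recording"},
}


def compatible_tools(dataset_types: set[str]) -> list[str]:
    """Return (sorted) tools that accept at least one data type in a dataset."""
    return sorted(name for name, accepted in TOOLS.items() if accepted & dataset_types)


# Usage: a dataset holding HRTFs and a head mesh.
print(compatible_tools({"HRTF", "mesh"}))
```

Matching on data types rather than on individual datasets means a newly uploaded dataset is linked to existing tools automatically, as long as its files are labelled with known types.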

Designed for Contribution and Reproducibility

To help ensure high scientific quality, only verified researchers can contribute to the SONICOM Ecosystem. Contributors follow a clear, step‑by‑step process: they register using their ORCID iD (a persistent researcher identifier), describe their work using standardised metadata, define what each dataset contains, and upload their files either individually or in larger batches.

Databases can move through several visibility stages:

- Private (during creation and revision)

- Public within the Ecosystem

- DOI assigned (modifications allowed, but with a permanent link)

- Persistently published and locked for long‑term reference

This staged approach mirrors real research practice and supports transparent, reproducible science.
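The four visibility stages behave much like a simple state machine. The Python sketch below models them under two assumptions that the article does not state: a database moves through the stages strictly in order and never back, and its content remains editable in every stage before the final, locked one. The names `Visibility`, `can_modify`, and `advance` are hypothetical and do not describe the Ecosystem's actual implementation.

```python
from enum import IntEnum


# Hypothetical model of the four visibility stages.
# ASSUMPTION: stages are passed through in order and never reversed.
class Visibility(IntEnum):
    PRIVATE = 0       # during creation and revision
    PUBLIC = 1        # visible within the Ecosystem
    DOI_ASSIGNED = 2  # permanent link, modifications still allowed
    PUBLISHED = 3     # persistently published and locked


def can_modify(stage: Visibility) -> bool:
    """ASSUMPTION: content is editable in every stage except the locked one."""
    return stage != Visibility.PUBLISHED


def advance(stage: Visibility) -> Visibility:
    """Move to the next stage; the persistently published stage is terminal."""
    if stage == Visibility.PUBLISHED:
        raise ValueError("a persistently published database is locked")
    return Visibility(stage + 1)


# Usage: take a new database from private to DOI assigned.
stage = advance(advance(Visibility.PRIVATE))
print(stage.name, can_modify(stage))
```

Using an ordered enum makes the "only forward" assumption explicit; if the real platform allows returning to an earlier stage, `advance` would need a more general transition table.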

A Platform with Lasting Impact

The SONICOM Ecosystem was conceived not just as a project deliverable, but as a lasting infrastructure for the community.

Principal Investigator of SONICOM, Prof Lorenzo Picinali from Imperial College London, highlights its broader significance:

“SONICOM includes a dedicated work package aimed at extending engagement beyond the consortium and ensuring long-term impact beyond the project’s duration. The SONICOM Ecosystem is a central outcome of this effort, supporting both active external engagement and sustained post-project impact. It stands as a major milestone not only for SONICOM, but for the wider spatial acoustics and immersive audio community. This approach sets a precedent for future projects and could evolve into a standard expectation for research initiatives in this field.”

Open source, community‑driven, and built for the long term, the SONICOM Ecosystem lays the groundwork for the next generation of personalised audio technologies.

Explore the Ecosystem at: https://ecosystem.sonicom.eu

Dr Piotr Majdak presenting the SONICOM Ecosystem at DAGA 2026